Most personal-knowledge systems collapse under their own weight because they treat capture and compilation as the same activity. Treating them as distinct stages — bookmarks for capture, the vault for organisation, articles for synthesis, the static site for publication — produces a pipeline where each stage has its own discipline and the end result is durable.

Overview

The phrase “second brain” is associated with Tiago Forte and the Building a Second Brain (BASB) framework — a structured approach to capturing, organising, distilling, and expressing personal knowledge. The idea is older than the brand. Vannevar Bush imagined the memex in 1945; Niklas Luhmann’s Zettelkasten produced 90,000 interlinked notes that fed his books. The shared instinct is that a single brain is a poor substitute for a brain plus an external, persistent, queryable structure that holds what you’ve read and how it connects.

What’s changed in 2024–2026 is that the compilation layer of that pipeline became automatable. Andrej Karpathy’s LLM Knowledge Base pattern made the case explicit: drop raw sources into a folder, point an LLM at it, get back a structured wiki. The middle layer — the part that historically made personal-knowledge systems collapse under their own weight — is now machine-assisted.

This article describes a working pipeline built around that observation. The implementation is Obsidian + a few sync scripts + a static site. The principle is general: keep capture, organisation, compilation, and publication as four distinct stages, each with its own discipline and its own tool.

Capture

The capture layer is whatever puts raw material in front of you, then preserves it.

For me that’s four streams:

- X/Twitter bookmarks: exported via Dewey, which produces a CSV that a small Python script ingests into the vault as one markdown file per bookmark, with author, date, source URL, category, and topic tags in YAML frontmatter

- RSS feeds: managed in the RSS Dashboard plugin inside Obsidian itself; ~80 sources across research preprints, lab blogs, news, and developer commentary

- Academic articles: preprints and journal papers pulled into the vault as PDFs (or, where available, machine-readable HTML) alongside their citation metadata. The RSS layer surfaces what gets announced; this stream captures what gets read. Papers go into a section’s raw/ folder when they’re earmarked for compilation, or into a general Library/ when they’re reference material rather than active source for an article

- Web clips and conversation exports: long-form pieces that warrant a full read go into a raw/ folder under whichever wiki section they belong to, ready for compilation
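
The bookmark stream above can be sketched roughly as follows. This is a minimal illustration, not the actual ingest script: the column names (`author`, `date`, `url`, `category`, `tags`, `text`), the semicolon-separated tag format, and the filename scheme are all assumptions, and a real Dewey export may be laid out differently.

```python
import csv
import io
import re
from pathlib import Path


def slugify(text: str, max_len: int = 60) -> str:
    """Lowercase, collapse non-alphanumeric runs to '-', and cap the length."""
    slug = re.sub(r"[^a-z0-9]+", "-", text.lower()).strip("-")
    return slug[:max_len].rstrip("-") or "untitled"


def yaml_quote(value: str) -> str:
    """Double-quote a scalar so colons, hashes, and quotes stay valid YAML."""
    return '"' + value.replace("\\", "\\\\").replace('"', '\\"') + '"'


def bookmark_to_markdown(row: dict) -> str:
    """Render one CSV row as a markdown note with YAML frontmatter."""
    # Assumed format: tags arrive as one semicolon-separated cell.
    tags = [t.strip() for t in (row.get("tags") or "").split(";") if t.strip()]
    tag_lines = [f"  - {yaml_quote(t)}" for t in tags]
    lines = [
        "---",
        f"author: {yaml_quote(row['author'])}",
        f"date: {yaml_quote(row['date'])}",
        f"source: {yaml_quote(row['url'])}",
        f"category: {yaml_quote(row.get('category') or '')}",
        "tags:" if tag_lines else "tags: []",
        *tag_lines,
        "---",
        "",
        row["text"].strip(),
        "",
    ]
    return "\n".join(lines)


def ingest(csv_text: str, out_dir: Path) -> list[Path]:
    """Write one markdown file per bookmark row and return the paths written."""
    out_dir.mkdir(parents=True, exist_ok=True)
    written = []
    for row in csv.DictReader(io.StringIO(csv_text)):
        # Hypothetical filename scheme: date, author, first words of the text.
        name = "-".join(
            [slugify(row["date"]), slugify(row["author"]), slugify(row["text"], 30)]
        )
        path = out_dir / f"{name}.md"
        path.write_text(bookmark_to_markdown(row), encoding="utf-8")
        written.append(path)
    return written
```

Quoting every scalar is a deliberately blunt choice: tweet text and handles often contain colons or hashes, and a quoted string never needs special-casing.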

The discipline at this stage is to capture broadly and judge later. Anything that even suggests a thread worth following gets caught. The cost of an unread bookmark is close to zero; the cost of missing the link between two ideas because one of them never made it into the system is high.

Organise

Once material is captured, the vault’s job is to make it findable.

I use a flat tag taxonomy — categories like AI-and-LLMs, Politics-and-Geopolitics, Programming-and-Dev-Tools, etc. — combined with namespaced topic tags (topic/claude-code, topic/rag, topic/legal-nlp). Bookmarks land tagged automatically based on keyword matching against the existing taxonomy; edge cases get reviewed and re-categorised manually.
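
As an illustration of the keyword matching described above, here is a minimal sketch. The keyword lists are invented for the example, and the scoring rule (count whole-word hits, send anything with no hits to manual review) is an assumption rather than the vault's actual logic.

```python
import re

# Hypothetical taxonomy: each tag maps to keywords that suggest it.
TAXONOMY = {
    "AI-and-LLMs": ["llm", "language model", "transformer"],
    "Programming-and-Dev-Tools": ["python", "compiler", "git"],
    "topic/rag": ["rag", "retrieval-augmented"],
    "topic/claude-code": ["claude code"],
}


def suggest_tags(text: str, taxonomy: dict = TAXONOMY, min_hits: int = 1) -> list[str]:
    """Return tags whose keywords appear in the text, strongest match first."""
    lowered = text.lower()
    scores = {}
    for tag, keywords in taxonomy.items():
        # Word boundaries keep "rag" from matching inside "storage".
        hits = sum(
            len(re.findall(rf"\b{re.escape(kw)}\b", lowered)) for kw in keywords
        )
        if hits >= min_hits:
            scores[tag] = hits
    # Sort by descending hit count, then alphabetically for stable output.
    return sorted(scores, key=lambda t: (-scores[t], t))


def needs_review(text: str) -> bool:
    """Flag bookmarks that matched nothing, so they get categorised by hand."""
    return not suggest_tags(text)
```

Whole-word matching is the main design choice here: short keywords such as "rag" produce many false positives under plain substring search.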

Two subtler decisions matter here:

- One source, one location. A bookmark lives in TWEET ARCHIVE/. A paper lives under the wiki section where it belongs. A conversation export lives in _Archive/. If a piece of material exists in two places, both versions decay; if it lives in one, the location is implicitly part of its meaning.

- The vault is canonical. When something gets published (to a blog, a paper, a slide deck, a static site), the published artefact is downstream of the vault, never upstream. The vault is what gets backed up; the artefacts are derivable.

Compile

The compile layer is where a wiki actually grows. It’s also the layer most people skip.

I run two wikis under the same pipeline: an AI Wiki and a Legal Tech Wiki. Each has the same structure:

- Articles — 500–1500 words, narrative synthesis across multiple sources, with primary citations

- Concepts — 100–300 words, glossary-style definitions, used as cross-references inside articles

Compilation runs in two modes. The deliberate mode starts with a known batch of sources and an intent: I have these five Karpathy threads from April; what’s the article they’re trying to be? The exploratory mode starts looser — scan recent bookmarks for fits with the existing wiki — and lets the structure emerge from a conversation with Claude. Both modes end at the same gate.

The non-negotiables at this stage:

- Every claim is traceable. No statement enters an article without a link back to the source it came from. Where a popular figure turns out to be misattributed, the entry says so and traces the actual source — see Semantic Collapse for a worked example.

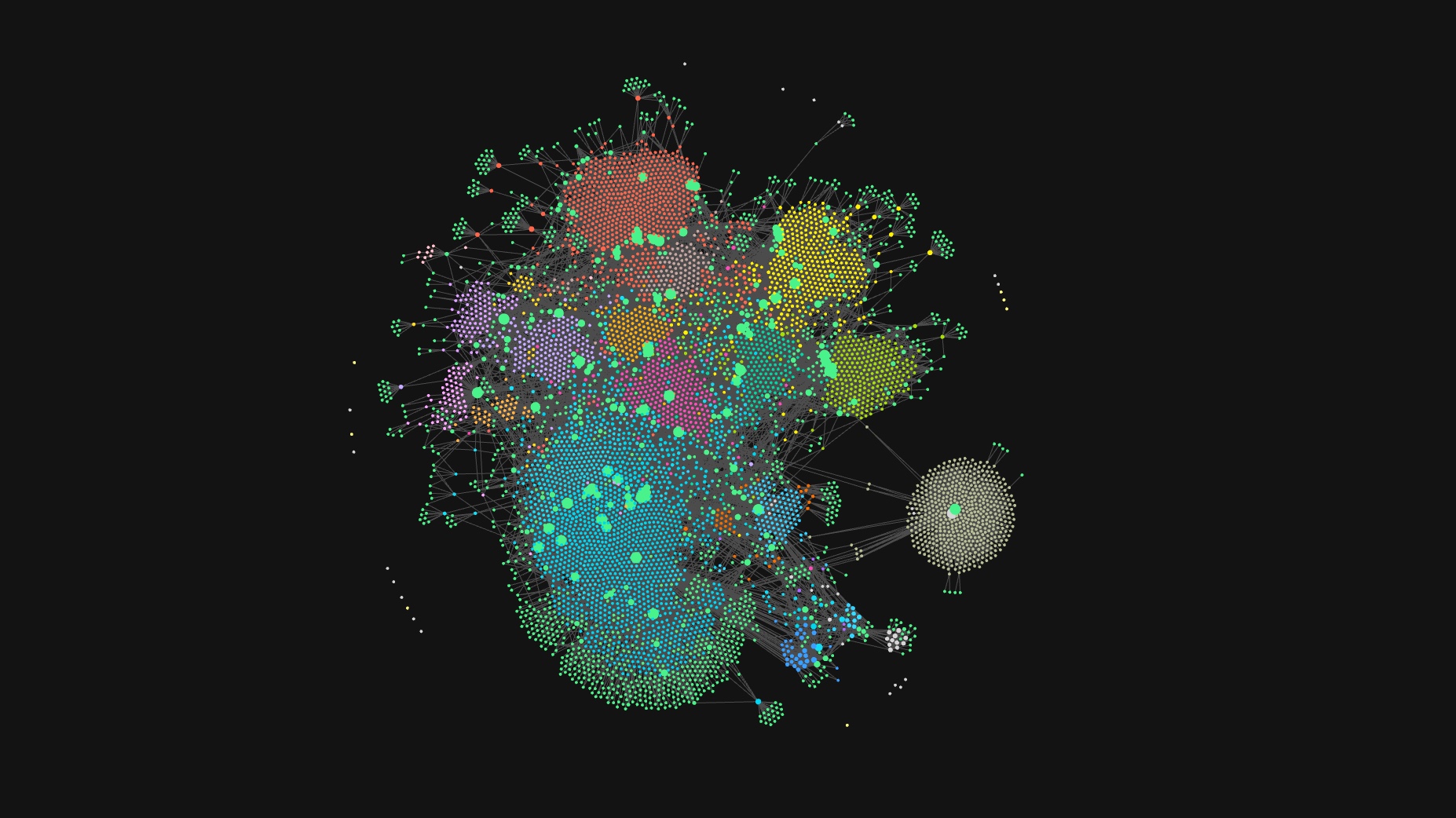

- Wikilinks for cross-reference, external links for attribution. Internal *concepts* build the graph; [author](source-url) builds the citation chain.

- Drafts stay drafts until they earn published. A flip of one frontmatter field is the only action that moves an article into the public site.
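
The traceability rule can be partly checked by machine before a draft is promoted. The sketch below is an assumption about how such a check might look, not part of the pipeline as described: it uses "at least one external markdown link per paragraph" as a crude proxy for "every claim is traceable" and lists the paragraphs that fail it.

```python
import re

# An external citation here means a markdown link to an http(s) URL.
EXTERNAL_LINK = re.compile(r"\[[^\]]+\]\(https?://[^)\s]+\)")
# Leading YAML frontmatter block, if present.
FRONTMATTER = re.compile(r"\A---\n.*?\n---\n", re.DOTALL)


def uncited_paragraphs(markdown: str) -> list[str]:
    """Return body paragraphs that contain no external link.

    Headings are skipped. Wikilinks do not count as citations, since they
    point inside the vault rather than at a source.
    """
    body = FRONTMATTER.sub("", markdown, count=1)
    paragraphs = [p.strip() for p in re.split(r"\n\s*\n", body) if p.strip()]
    return [
        p
        for p in paragraphs
        if not p.startswith("#") and not EXTERNAL_LINK.search(p)
    ]
```

A check like this only finds paragraphs with no link at all; whether a link actually supports the sentence next to it still needs a human read.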

Excavation and architecting

The phenomenology of synthesis-with-an-LLM resists the two ways people usually describe AI-assisted writing. It is not autocomplete — the model is not finishing my sentences. It is also not delegation — I am not handing the model a prompt and accepting its output. The closer descriptions are excavation and architecting, and they happen in alternation, sometimes inside a single sentence.

Excavation happens when a passage in something I’m reading surfaces a conceptual link to another piece I’ve read. The link feels found rather than made. The model is part of how it gets surfaced — it has been holding several long sources in working memory while I have been reading one carefully — but the connection itself feels latent in the material. We are both pulling at the same thread; whoever spots it first names it. The piece on Karpathy’s three April threads came together this way: the capability gap and the cognitive core and the harness mindset are not obviously the same argument until you put them next to each other, and once you do, the shape they form together is — in retrospect — the only shape they could have formed.

Architecting happens immediately after. Once a link is found, the article is not yet written — it is one of several possible articles. The decision of which thread is the spine and which are the ribs, which detail leads and which sits in the middle, which sentence opens and which one waits for the second paragraph, is constructive work that does not have a single right answer. The conversation with the model here takes a different shape: I propose, the model probes the proposal, I revise. The model has no investment in the piece being one thing rather than another, which is freeing — the editorial conviction stays mine, but the search space of possible structures is genuinely shared.

The tension between the two metaphors is the point. Excavation suggests the meaning is already in the sources, waiting to be uncovered. Architecting suggests you are imposing structure that did not exist before. Both are true at different moments in the same hour. The risk in either metaphor alone is that you flatter the process: pure-excavation talk treats the model as a search tool; pure-architecting talk treats it as a structurally creative partner. The honest description is that some sentences are excavated, some are architected, and the boundary between them is hard to see while you are inside the conversation.

What this changes about the work, in practice, is that the cognitive bottleneck moves. In solo writing the bottleneck is holding enough sources in mind at once to see the link. In co-creative writing the bottleneck is deciding which of the links the model surfaces is worth following. That is editorial judgement, and it is the skill that does not transfer to the model. A piece comes out well not because the right link gets found — many do — but because the right link gets kept, the wrong ones get dropped, and the structure that holds the kept links sits naturally with the source material.

It also changes what the work feels like. Solo synthesis at this scale is exhausting in a specific way: the working memory required to keep several long arguments simultaneously alive is roughly the work. Co-creative synthesis is exhausting in a different way: working memory is cheap and editorial discrimination is the load. You spend more of your attention on what to discard, and less on what to retain.

Publish

The publication layer is the smallest and most boring tier of the system, which is by design.

A static site (Astro) reads the vault. A sync script filters on tags: ai-wiki AND status: published, transforms wikilinks to URLs, rewrites Obsidian callouts to HTML, and writes one markdown file per article into the site’s content folder. The site is rebuilt; deployment is automatic.
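
A minimal sketch of the filter and wikilink steps, under stated assumptions: frontmatter is already parsed into a dict, tags are a list, and published articles live at a hypothetical `/wiki/<slug>/` URL. Callout rewriting and file output are left out.

```python
import re

# Matches [[Target]], [[Target|label]], and [[Target#Heading]].
WIKILINK = re.compile(r"\[\[([^\]|#]+)(?:#[^\]|]*)?(?:\|([^\]]+))?\]\]")


def slugify(title: str) -> str:
    """Turn a note title into a URL slug."""
    return re.sub(r"[^a-z0-9]+", "-", title.lower()).strip("-")


def wikilinks_to_urls(body: str, base: str = "/wiki/") -> str:
    """Rewrite Obsidian wikilinks as standard markdown links.

    Heading anchors are dropped in this sketch; the URL scheme is assumed.
    """
    def repl(match: re.Match) -> str:
        target = match.group(1).strip()
        label = (match.group(2) or target).strip()
        return f"[{label}]({base}{slugify(target)}/)"

    return WIKILINK.sub(repl, body)


def is_publishable(meta: dict, wiki_tag: str = "ai-wiki") -> bool:
    """Both conditions must hold: the wiki tag and status == published."""
    return wiki_tag in meta.get("tags", []) and meta.get("status") == "published"


def select_and_transform(notes: dict, wiki_tag: str = "ai-wiki") -> dict:
    """Map filename -> transformed body for publishable notes only.

    `notes` maps filename -> (frontmatter dict, markdown body).
    """
    return {
        name: wikilinks_to_urls(body)
        for name, (meta, body) in notes.items()
        if is_publishable(meta, wiki_tag)
    }
```

Because the output is a pure function of the vault's contents, rerunning the sync always reproduces the same site content, which is what lets the site be a view rather than a second store.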

Three properties matter:

- The site is a view of the vault, not a parallel store. Hand-edits to published files are forbidden — they’d be wiped on the next sync. The vault stays authoritative.

- Status is a single field. Promoting or demoting an article is one frontmatter edit and one script run. There is no separate publishing UI to maintain.

- Drafts are invisible. Anything not marked published simply does not exist as far as the public site is concerned. This makes “publish when ready” the default state, not a workflow exception.

Why publish

A second-brain system that stays private is still useful — for many people, it’s enough. The argument for publishing is more specific: it is the citation discipline that pays off, not the audience.

Most online writing on AI inherits its facts from other writing rather than from the underlying paper. Stanford did not “prove that RAG fails at 50,000 documents.” Karpathy did not say “90% of AI advice will be dead in 6 months” without context. These claims propagated through tweet-of-tweet summaries until the original source was unfindable. Writing for publication forces a different posture: every figure has to come from somewhere, and where the chain breaks, you say so on the page.

The site that emerges is, partly, an existence proof of its own methodology. The articles are simultaneously synthesis and demonstration: here is what the technique produces, evaluated by what you’re now reading.

Limitations and open questions

- Capture has a hoarding pathology. Roughly 4,700 bookmarks sit in the vault; only a tiny fraction will ever be compiled. The system tolerates this because the cost of carrying unread material is low, but the rate of capture has to be sustainable, not maximal, or the vault becomes a graveyard.

- The compilation stage is the bottleneck. Capture is automated; publication is automated; compilation is hand-driven dialogue with an LLM. That stage has not been mechanised, and it should not be — quality at the synthesis layer is what justifies the rest of the pipeline.

- Provenance for tweet sources is fragile. Tweets get deleted; profiles get suspended. A long-term archive needs to mirror key quotes inline rather than relying on the source link surviving. Currently the pipeline accepts link rot; this is a known weakness.

- The wiki is not a full memory of “what I’ve read”. It is a memory of what I’ve compiled. Plenty of useful reading never makes it through the compile stage and effectively disappears from the system, even though the bookmark survives. That’s a feature in some readings (only finished thinking gets recorded) and a bug in others.

Practical applications

For a researcher, lab, or institution considering this pattern:

- Pick the four stages explicitly. Do not let capture and compile run together; they have different rhythms and different rewards.

- Make the publication gate cheap. A single frontmatter field is enough; anything more becomes a chore that prevents publishing.

- Resist the urge to build a custom CMS. Markdown in a vault, plus a static site generator, plus one sync script, is robust because each component is replaceable. Anything more bespoke becomes maintenance debt.

- Privilege short citation chains. A wiki where every claim is one click from its source has structural integrity that the popular-summary-of-popular-summary literature lacks, and keeping to that discipline is most of the work.